Artificial intelligence is learning to make art, and nobody has quite figured out how to handle it — including DeviantArt, one of the best-known homes for artists on the internet. Last week, DeviantArt decided to step into the minefield of AI image generation, launching a tool called DreamUp that lets anyone make pictures from text prompts. It’s part of a larger DeviantArt attempt to give more control to human artists, but it’s also created confusion — and, among some users, anger.

DreamUp is based on Stable Diffusion, the open-source image-spawning program created by Stability AI. Anyone can sign into DeviantArt and get five prompts for free. People can also buy between 50 and 300 prompts per month with the site's Core subscription plans, and more beyond that for a per-prompt fee. Unlike other generators, DreamUp has one distinct quirk: it's built to detect when you're trying to ape another artist's style. And if the artist objects, it's supposed to stop you.

“AI is not something that can be avoided. The technology is only going to get stronger from day to day,” says Liat Karpel Gurwicz, CMO of DeviantArt. “But all of that being said, we do think that we need to make sure that people are transparent in what they’re doing, that they’re respectful of creators, that they’re respectful of creators’ work and their wishes around their work.”

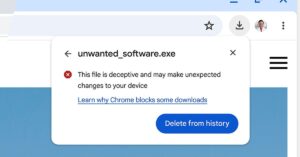

Contrary to some reporting, Gurwicz and DeviantArt CEO Moti Levy tell The Verge that DeviantArt isn’t doing (or planning) DeviantArt-specific training for DreamUp. The tool is vanilla Stable Diffusion, trained on whatever data Stability AI had scraped at the point DeviantArt adopted it. If your art was used to train the model DreamUp uses, DeviantArt can’t remove it from the Stability dataset and retrain the algorithm. Instead, DeviantArt is addressing copycats from another angle: banning the use of certain artists’ names (as well as the names of their aliases or individual creations) in prompts. Artists can fill out a form to request this opt-out, and they’ll be approved manually.
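
DeviantArt hasn't said how its name filter is built. As a rough illustration only, a prompt blocklist of this kind could normalize each prompt and then check it against the names, aliases, and character names that artists have opted out. The function names, the blocklist contents, and the normalization steps below are hypothetical assumptions for this sketch. They are not DeviantArt's implementation.

```python
import re
import unicodedata

# Hypothetical blocklist: names, aliases, and character names that
# opted-out artists asked to have blocked (illustrative values only).
BLOCKED_TERMS = {"example artist", "example alias", "example character"}


def normalize(text: str) -> str:
    """Strip accents, lowercase, and collapse punctuation/whitespace to single spaces."""
    text = unicodedata.normalize("NFKD", text)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^a-z0-9]+", " ", text.lower())
    return text.strip()


def is_prompt_blocked(prompt: str, blocked_terms=BLOCKED_TERMS) -> bool:
    """Return True if any blocked term appears as a whole-word phrase in the prompt."""
    # Pad with spaces so "example artist" doesn't match inside a longer word.
    padded = f" {normalize(prompt)} "
    return any(f" {normalize(term)} " in padded for term in blocked_terms)


print(is_prompt_blocked("a castle in the style of Example-Artist"))  # True
print(is_prompt_blocked("a castle in the style of Exampel Artist"))  # False
```

The check only matches exact phrases, so a misspelled name gets past it. That lines up with DeviantArt's approach of relying on reports and takedowns, not the filter alone, to catch deliberate evasion.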

Controversially, Stable Diffusion was trained on a huge collection of web images, and the vast majority of the creators didn’t agree to inclusion. One result is that you can often reproduce an artist’s style by adding a phrase like “in the style of” to the end of the prompt. It’s become an issue for some contemporary artists and illustrators who don’t want automated tools copying their distinctive looks — either for personal or professional reasons.

These problems crop up across other AI art platforms, too. Among other factors, questions about consent have led web platforms, including ArtStation and Fur Affinity, to ban AI-generated work entirely. (The stock images platform Getty also banned AI art, but it’s simultaneously partnered with Israeli firm Bria on AI-powered editing tools, marking a kind of compromise on the issue.)

DeviantArt has no such plans. “We’ve always embraced all types of creativity and creators. We don’t think that we should censor any type of art,” Gurwicz says.

Instead, DreamUp is an attempt to mitigate the problems — primarily by limiting direct, intentional copying without permission. “I think today that, unfortunately, there aren’t any models or data sets that were not trained without creators’ consent,” says Gurwicz. (That’s certainly true of Stable Diffusion, and it’s likely true of other big models like DALL-E, although the full dataset of these models sometimes isn’t known at all.)

"We knew that whatever model we would start working with would come with this baggage," Gurwicz continued. "The only thing we can do with DreamUp is prevent people also taking advantage of the fact that it was trained without creators' consent."

If an artist is fine with being copied, DeviantArt will nudge users to credit them. When you post a DreamUp image through DeviantArt's site, the interface asks whether you're working in the style of a specific artist and, if so, for that artist's name (or multiple names). Acknowledgment is required. If someone flags a DreamUp work as improperly tagged, DeviantArt can see what prompt the creator used and make a judgment call. Works that omit credit, or works that intentionally evade a filter with tactics like misspelling a name, can be taken down.

This approach seems helpfully pragmatic in some ways. While it doesn’t address the abstract issue of artists’ work being used to train a system, it blocks the most obvious problem that issue creates.

Still, there are several practical shortcomings. Artists have to know about DreamUp and understand they can submit requests to have their names blocked. The system is aimed primarily at granting control to artists on the platform, not to non-DeviantArt artists who vocally object to AI art. (I was able to create works in the style of Greg Rutkowski, who has publicly stated his dislike of being used in prompts.) And perhaps most importantly, the blocking only works on DeviantArt's own generator. You can easily switch to another Stable Diffusion implementation and upload the results to DeviantArt.

Alongside DreamUp, DeviantArt has rolled out a separate tool meant to address the underlying training question. The platform added an optional flag that artists can tick to indicate whether they want to be included in AI training datasets. The “noai” flag is meant to create certainty in the murky scraping landscape, where artists’ work is typically treated as fair game. Because the tool’s design is open-source, other art platforms are free to adopt it.

DeviantArt isn’t doing any training itself, as mentioned before. But other companies and organizations must respect this flag to comply with DeviantArt’s terms of service — at least on paper. In practice, however, it seems mostly aspirational. “The artist will signal very clearly to those datasets and to those platforms whether they gave their consent or not,” says Levy. “Now it’s on those companies, whether they want to make an effort to look for that content or not.” When I spoke with DeviantArt last week, no AI art generator had agreed to respect the flag going forward, let alone retroactively remove images based on it.
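
The article doesn't say exactly how the flag is exposed to outside crawlers. For this sketch, assume it appears as `noai` and `noimageai` directives in a page's robots meta tag or its `X-Robots-Tag` HTTP header. Under that assumption, a scraper that chose to respect the flag could check for those directives before collecting an image. The class and function names below are hypothetical. Nothing in the code, or in the web itself, forces a scraper to run a check like this.

```python
from html.parser import HTMLParser

# Directives assumed (for this sketch) to signal an AI-training opt-out.
OPT_OUT_DIRECTIVES = {"noai", "noimageai"}


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # attrs is a list of (name, value) pairs; value may be None.
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for directive in attr_map.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def opted_out_of_ai_training(html: str, x_robots_tag: str = "") -> bool:
    """Return True if the page's robots meta tag or X-Robots-Tag header opts out."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_directives = {d.strip().lower() for d in x_robots_tag.split(",")}
    return bool((parser.directives | header_directives) & OPT_OUT_DIRECTIVES)


page = '<head><meta name="robots" content="noai, noimageai"></head>'
print(opted_out_of_ai_training(page))  # True
```

The check is advisory on both sides. A page can carry the directive, but honoring it is entirely up to whoever runs the scraper. Levy makes the same point about the flag.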

At launch, the flag did exactly what DeviantArt hoped to avoid: it made artists feel like their consent was being violated. It started as an opt-out system that defaulted to granting permission for training and asked artists to set the flag if they objected. The decision probably didn't have much immediate effect, since companies were already scraping these images as a matter of course. But it infuriated some users. One popular tweet from artist Ian Fay called the move "extremely scummy." Artist Megan Rose Ruiz released a series of videos criticizing the decision. "This is going to be a huge problem that's going to affect all artists," she said.

The outcry was particularly pronounced because DeviantArt has offered tools that protect artists from some other tech that many are ambivalent toward, particularly non-fungible tokens, or NFTs. Over the past year, it’s launched and since expanded a program for detecting and removing art that was used for NFTs without permission.

DeviantArt has since tried to address criticism of its new AI tools. It’s set the “noai” flag on by default, so artists have to explicitly signal their agreement to have images scraped. It also updated its terms of service to explicitly order third-party services to respect artists’ flags.

But the real problem is that, especially without extensive AI expertise, smaller platforms can only do so much. There’s no clear legal guidance around creators’ rights (or copyright in general) for generative art. The agenda so far is being set by fast-moving AI startups like OpenAI and Stability, as well as tech giants like Google. Beyond simply banning AI-generated work, there’s no easy way to navigate the system without touching what’s become a third rail to many artists. “This is not something that DeviantArt can fix on our own,” admits Gurwicz. “Until there’s proper regulation in place, it does require these AI models and platforms to go beyond just what is legally required and think about, ethically, what’s right and what’s fair.”

For now, DeviantArt is making an effort to stimulate that line of thinking — but it’s still working out some major kinks.