
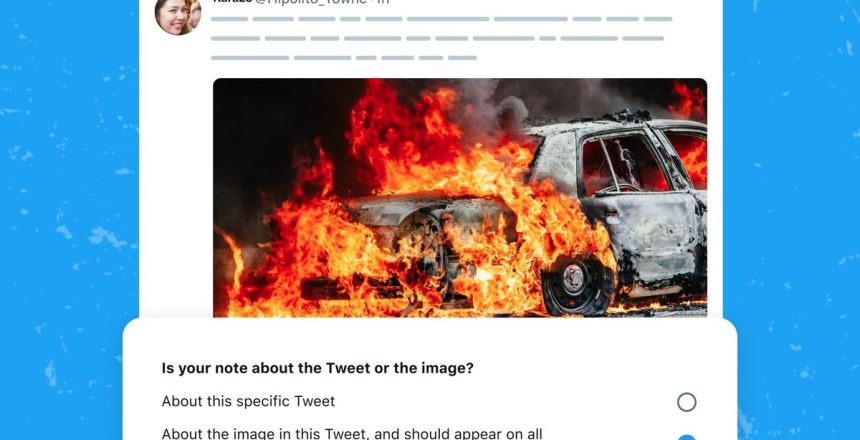

Twitter is expanding its crowdsourced fact-checking program to include images, shortly after a fake image went viral claiming to show an “explosion” near the Pentagon.

Community Notes, which are user-generated and appear below tweets, were introduced to add context to potentially misleading content on Twitter. Now contributors will be able to add information specifically related to an image, and that context will populate below “recent and future matching images,” according to the company.

In the announcement, Twitter specifically mentions AI-generated fake images, which have ranged from confusing to frightening to amusing as they've gone viral on the platform. Last week, for instance, a fake picture of an explosion at the Pentagon was shared by accounts with blue verified checkmarks, one of which pretended to be affiliated with Bloomberg News. In a less frightening example, an AI-generated image of Pope Francis dressed like a streetwear hypebeast went viral before many people realized it was fake.

Twitter Community Notes contributors will be able to specify whether they're adding context to the tweet itself or to the image it features. So far, the feature applies only to single images, though the company says it's working on expanding it to videos and to posts with multiple images. Twitter warns that notes likely won't appear below every matching image, because it will initially "err on the side of precision."